The gig economy in India is frequently framed as a great equaliser. With its low barriers to entry and the promise of being your own boss, it has become the new frontier of urban employment, projected to reach 23.5 million workers by 2030 (NITI Aayog, 2022). In the transport and delivery sectors, platform giants now command a workforce larger than Indian Railways (Ministry of Railways, 2020). Yet this digital transformation is unfolding across a stark demographic void: whilst women make up roughly 10 per cent of the global app-based transport workforce, in India that figure languishes below 1 per cent (ILO, 2021b).

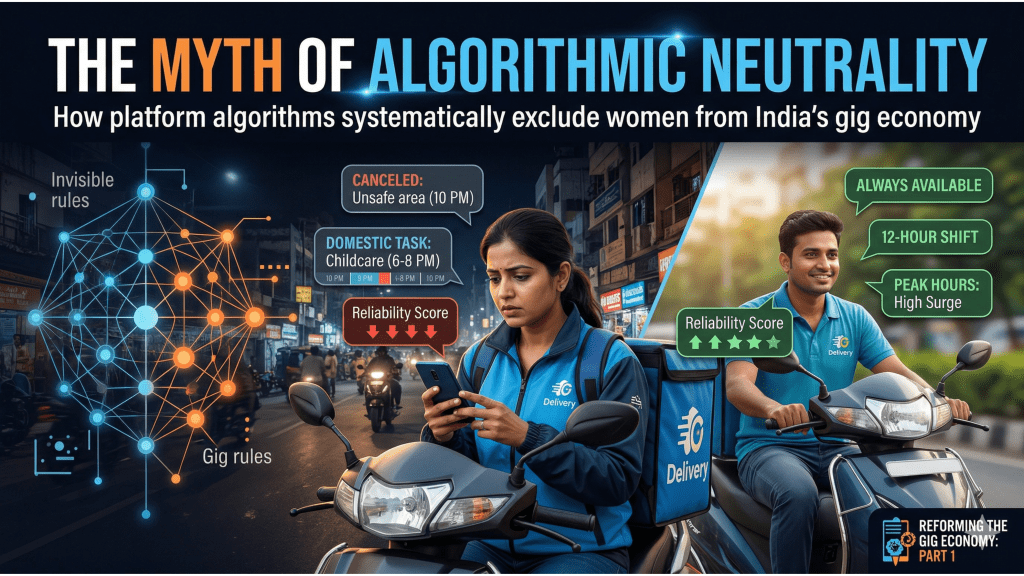

This disparity is not a consequence of a lack of interest or skill. It is the outcome of what researchers have termed a male-as-default design logic, one in which the architecture of digital labour platforms is built around the implicit assumption that the ideal worker is unencumbered, mobile, and available at all times (SafetiPin, 2025). To move beyond a descriptive understanding of this exclusion, we must analyse the algorithmic structures and institutional incentives that systematically produce it. In this first blog of our series, we examine how the algorithm, the invisible boss of the gig economy, shapes the reality of work for women.

Image Source: Observer Research Foundation

Fallacy of Neutrality

In the world of app-based work, the algorithm is the ultimate manager. It assigns tasks, monitors performance, and determines earnings, all without a human face. To the platforms that deploy them, these systems are presented as gender-neutral mathematical models that treat every worker, or partner, identically, on the basis of objective data.

However, as a growing body of research makes clear, this supposed neutrality is a fallacy. As the scholar Virginia Eubanks (2018) has argued, automated systems do not simply reflect a neutral world; they encode and reproduce the inequalities of the world in which they were designed. When a system is built around an ideal worker, someone with no domestic responsibilities, total geographic mobility, and round-the-clock availability, it inadvertently constructs a barrier against those whose lives do not fit this profile.

For women in India, the gig economy’s algorithms do not simply manage work; they penalise the social and safety realities that women navigate daily.

The architecture of the platform is not a neutral grid; it is a gendered one (ORF, 2020).

The Reality of Unpaid Labour

At the heart of most transport and delivery platforms is an incentive structure designed to ensure a constant, predictable supply of labour. Algorithms reward workers who log long, continuous hours and remain active during high-demand peak windows (ORF, 2020). These systems are calibrated for availability, and availability, in the platform economy, means being perpetually online.

For many male workers, whose domestic needs are frequently managed by women in the household, maintaining a 12-hour shift is principally a matter of physical stamina. For women, time is not a linear resource (Ghosh, A., Ramachandran, R., & Zaidi, M., 2021). According to the International Labour Organisation, Indian women spend an average of five hours more per day on unpaid care work than men, one of the highest such differentials in the world (ILO, 2018). This double burden creates fragmented work schedules that the platform’s algorithm is structurally unable to accommodate.

When a woman logs off to provide unpaid labour at home, the algorithm registers an absence of paid labour and considers the worker inactive. Consequently, women workers face lower reliability scores that may reduce their visibility for high-value bookings. A study by the Oxford Internet Institute (2021) found that women on gig platforms consistently received lower algorithmic scores not because of inferior performance during their active windows, but because of the structural incompatibility between care responsibilities and platform availability requirements. The score itself becomes a mechanism of exclusion.

Safety as a Metric Failure

A second and equally significant friction point lies in the tension between acceptance rates and personal safety. To maintain market efficiency, platforms penalise workers who frequently reject or cancel bookings. High cancellation rates can trigger what practitioners call algorithmic cooling-off periods, during which the system temporarily deprioritises a worker. In extreme cases, they can lead to account deactivation (SafetiPin, 2025).

For women, these rejections are rarely a matter of preference. They are a survival strategy. A female driver may decline a ride that terminates in an unlit, peripheral neighbourhood after dark, or cancel a booking if a passenger’s behaviour during the pre-trip interaction feels threatening (Oxford Economics, 2024). These are rational decisions grounded in a legitimate and well-documented safety calculus. National Crime Records Bureau data consistently show that women face heightened risks of harassment and violence in public spaces, particularly at night and in areas with low footfall. Yet the algorithm processes these safety-driven decisions as service failures.

Image Source: The Guardian

The result is a feedback loop that compounds disadvantage. Women who work within a narrower window of safe hours and geographies receive lower performance scores, which reduces their access to high-value bookings, which suppresses their earnings, which in turn raises the question of whether participation in the platform is economically worthwhile. Attrition follows not from a lack of commitment, but from a rational response to a system that has been inadvertently designed to penalise caution (Ghosh, A., Ramachandran, R., & Zaidi, M., 2021).

Neutrality Produces a Gendered Gap

The gender pay gap in the gig economy is rarely the result of different base rates between male and female workers.

It is, rather, the cumulative result of how rewards and surge pricing are structured in relation to time.

The highest surge pricing on most transport and delivery platforms occurs during morning and evening rush hours. These are precisely the times when unpaid care responsibilities (cooking, childcare, school runs, and eldercare) are at their most intensive for women (Fu, X., Avenyo, E. & Ghauri, P., 2021). A study by researchers at the University of Chicago (Chen et al., 2019), which analysed Uber driver earnings in the United States, found that men earned approximately 7 per cent more per hour than women on the same platform, with a significant share of that gap attributable to the hours during which men chose to work, namely the high-surge periods that women’s domestic responsibilities placed out of reach.

Similarly, many platforms offer tiered bonuses for completing a high volume of trips within a defined period, such as completing 50 rides in a week to unlock an additional payment. Because women tend to work fewer or more fragmented hours owing to what researchers term time poverty, they rarely reach these upper-tier milestones (ILO, 2021). The effective hourly rate for women is therefore significantly lower than that of their male counterparts, even when the nominal rate is identical. What is presented as a universal incentive structure is, in practice, one calibrated to the life patterns of a male workforce (SafetiPin, 2025).
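The arithmetic behind this gap can be illustrated with a minimal sketch. Every figure below is a hypothetical assumption chosen for illustration (the fare per ride, the bonus amount, and the hours worked); only the 50-ride weekly threshold echoes the example above. No platform's actual pay formula is represented here.

```python
def effective_hourly_rate(rides, hours, fare_per_ride=100.0,
                          bonus_threshold=50, bonus=1500.0):
    """Weekly earnings per hour worked, including a volume bonus.

    All defaults are hypothetical: a flat fare per ride and a single
    bonus tier unlocked at `bonus_threshold` rides in the week.
    """
    earnings = rides * fare_per_ride
    if rides >= bonus_threshold:
        earnings += bonus  # the bonus is paid only above the threshold
    return earnings / hours


# Both workers complete 1.5 rides per hour at the same nominal fare.
full_week = effective_hourly_rate(rides=60, hours=40)   # crosses the threshold
fragmented = effective_hourly_rate(rides=30, hours=20)  # never reaches it

print(full_week)   # 187.5
print(fragmented)  # 150.0
```

Under these assumed numbers, identical productivity per hour still yields a 25 per cent higher effective rate for the worker who can stay online long enough to reach the bonus tier.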

What Needs to Change

It is worth pausing to ask why, given the mounting evidence, platforms have not moved to address these structural biases. The answer is not simply indifference. The myth of neutrality provides regulatory cover. When a system is presented as a mathematical model rather than a policy choice, responsibility for its outcomes becomes diffuse. What is required, therefore, is a shift from gender-neutral to gender-aware algorithmic management. Three reforms are essential.

1) Safety-Weighted Performance Metrics

Platforms must decouple safety-based cancellations from performance ratings. Algorithms should be redesigned to recognise justifiable cancellations, such as trips to destinations flagged as high-risk by publicly available safety data, or rides preceded by reported passenger harassment. Allowing workers to set preferred operating zones without penalty would enable women to remain active in areas where they feel secure, rather than forcing them to choose between safety and algorithmic standing (SafetiPin, 2025).
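One way to picture this decoupling is a cancellation rate that simply leaves justified cancellations out of the calculation. The sketch below is an assumption-laden illustration: the `Booking` record, the reason codes in `JUSTIFIED_REASONS`, and the scoring rule are all hypothetical, not any platform's real data model.

```python
from dataclasses import dataclass


@dataclass
class Booking:
    cancelled: bool
    reason: str = ""  # hypothetical reason code supplied by the worker


# Hypothetical reason codes a platform might treat as justified.
JUSTIFIED_REASONS = {"high_risk_destination", "reported_harassment"}


def cancellation_rate(bookings, safety_weighted=False):
    """Share of bookings cancelled by the worker.

    With safety_weighted=True, cancellations carrying a justified
    reason are dropped from both numerator and denominator.
    """
    if safety_weighted:
        bookings = [b for b in bookings
                    if not (b.cancelled and b.reason in JUSTIFIED_REASONS)]
    if not bookings:
        return 0.0
    return sum(b.cancelled for b in bookings) / len(bookings)


week = (
    [Booking(cancelled=False)] * 16
    + [Booking(cancelled=True, reason="high_risk_destination")] * 3
    + [Booking(cancelled=True, reason="changed_mind")]
)

raw = cancellation_rate(week)                             # 4 of 20 bookings
adjusted = cancellation_rate(week, safety_weighted=True)  # 1 of 17 bookings
```

In this made-up week, the raw rate counts all four cancellations, whilst the safety-weighted rate counts only the one that was not safety-related.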

2) Care-Sensitive Scheduling and Log-Off Protections

Rather than rewarding only continuous activity, algorithms should be adjusted to accommodate planned fragmentation. This means protecting a worker’s reliability score when she logs off during consistent caregiving windows, provided she maintains quality of service during her active hours. Incentive structures should shift from raw volume metrics to consistency and quality indicators, allowing those with care responsibilities to compete on a more level playing field. Pilot programmes of this kind, trialled by a small number of platforms in South-East Asia, have shown promising results in both retention and worker satisfaction (Fairwork Foundation, 2023).
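A log-off protection of this kind could, in principle, work by removing declared caregiving hours from the availability a worker is measured against. The following is a rough sketch under stated assumptions: the function name, the 12-hour expectation, and the idea of a score capped at 1.0 are all hypothetical and are not drawn from any platform or from the pilots cited above.

```python
def reliability_score(online_hours, expected_hours, protected_hours=0.0):
    """Fraction of required hours actually worked, capped at 1.0.

    `protected_hours` are pre-declared caregiving windows (a
    hypothetical feature) that are subtracted from the expectation
    before the score is computed.
    """
    required = max(expected_hours - protected_hours, 0.0)
    if required == 0.0:
        return 1.0  # nothing was required, so nothing was missed
    return min(online_hours / required, 1.0)


# A worker online for 6 hours against an assumed 12-hour expectation.
unprotected = reliability_score(online_hours=6, expected_hours=12)
protected = reliability_score(online_hours=6, expected_hours=12,
                              protected_hours=6)

print(unprotected)  # 0.5
print(protected)    # 1.0
```

The worker's behaviour is identical in both cases; only the baseline she is judged against changes.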

3) Integrated Safety Intelligence in the Driver Interface

Platforms should integrate publicly available urban safety data into the driver-side interface, providing workers with accessible, real-time information about the safety profile of a destination before they accept a booking. This would empower women to make informed decisions without relying on last-minute cancellations, thereby reducing the frequency of the very actions that the algorithm currently penalises as service failures (ORF, 2020).

Conclusion

The myth of neutrality allows platforms and regulators alike to avoid confronting the structural barriers women face. If the algorithm rewards only the worker who can behave like a machine, unencumbered by care, safety concerns, or the realities of public space in India, it will continue to exclude the very women who hold our society together.

Platforms do not simply need to onboard more women. They need to redesign the system into which women are being onboarded. A gig economy that genuinely includes women is not one that tolerates their presence; it is one that is built with their lives in view.
